New ALZ Accelerator: lessons learned

Principal Solution Architect, working daily with Azure, primarily focusing on automation and "everything as code".

This post was written for the Azure Spring Clean 2025 event. Visit the official website to see the schedule and links to great content.

Introduction

Azure Landing Zones (ALZ) have become the de facto standard for designing and implementing scalable, secure, and well-governed cloud environments. As an integral part of the Cloud Adoption Framework for Azure, the ALZ methodology has significantly evolved over the years. Today, it is supported by a vibrant community that engages through GitHub discussions and community calls.

The coding assets for ALZ have also matured and expanded to address diverse scenarios and tooling requirements. The latest release of the ALZ Accelerator simplifies the bootstrapping and provisioning of cloud platforms, covering everything from source control repositories and pipelines to Azure resources for platform landing zones.

In this blog post, I will share my experience implementing this new accelerator. I will compare it with the original accelerator (now referred to as “classic”), highlight the pros and cons of the design choices, and point out areas where we applied additional customizations, explaining the rationale behind these decisions.

Core features of ALZ Accelerator

If you wanted an ‘elevator pitch’ for the new ALZ Accelerator, we could say, it:

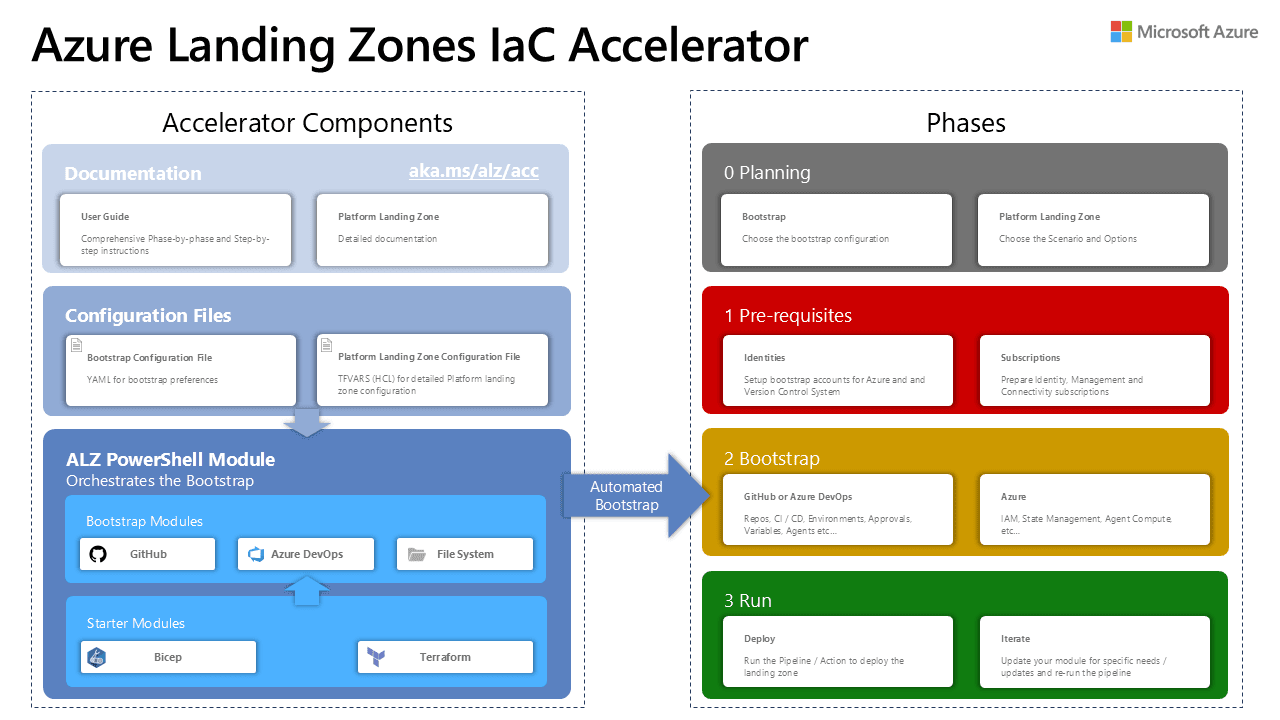

breaks down the entire ALZ deployment experience into four phases: the first two (Planning and Pre-requisites) are manual, while the other two (Bootstrap and Run) are fully automated.

uses an opinionated approach for deploying and managing the platform landing zones of the ALZ reference architecture. The Accelerator engineering team made a lot of design decisions to simplify the experience by abstracting the complexity of deploying the entire ‘ALZ stack’. The Accelerator doesn’t deal with workload landing zones, and it doesn’t integrate directly with the Vending machine module!

supports both Bicep and Terraform for IaC modules. Due to Terraform’s versatility with close to five thousand providers, it is used in the Bootstrap phase even if you choose Bicep as your IaC language.

creates a continuous delivery environment in either Azure DevOps or GitHub. Alternatively, you could ‘bring your own VCS’ as well and use other services like GitLab, but you need to configure such integration yourself.

uses a new ALZ PowerShell module that gathers user input, configures the bootstrap environment, and ensures you can deploy the platform landing zones from it.

Its main objective, in my view, is to accelerate, rather than to provide every conceivable design and configuration option. This is done through abstraction and end-to-end automation.

The big picture and phases

I like this diagram, which breaks the experience down into components and phases:

I would encourage you to go through the documentation and learn the details but here is my simplified version of the entire process:

Start with planning: most organizations have some footprint in Azure already (aka Brownfield), so you need to consider where you are going to ‘attach’ the new Management Group hierarchy (with policies and roles), what IaC language and VCS platform you want to use, etc.

⚠ I assume you are familiar with the ALZ reference architecture, its principles, design areas, and all the design decisions you should carefully make for your production environment. Without this knowledge it is quite impossible to comprehend what the ALZ Accelerator does, and what value it provides!

The documentation gives many recommendations you should consider, like:

- using Tenant Root Group as the parent management group
- using the Three subscription model with separate Management, Connectivity, and Identity platform subscriptions, and deploying bootstrap resources to the Management subscription
- choosing between public or private networking for either self-hosted or Microsoft/GitHub-hosted agents (runners)

A comprehensive spreadsheet is available that lists all the configuration settings; you can use it to plan your choices and later to fill out the configuration files.

Validate you have all pre-requisites in place: from tools installed on your admin machine (PWSH, Az CLI, Git) and the configuration of your chosen VCS (things like PAT tokens and permissions), to having empty Azure subscriptions for platform LZs and an account with sufficient roles assigned.

💡 Tip: Once you download the ALZ module, there is a handy Test-AcceleratorRequirements cmdlet you could use to validate your machine.

Bootstrap phase: Here you install the ALZ module and, based on the chosen VCS and IaC combination, follow additional guidance to create a local folder structure and prepare a bootstrap configuration file.

Once ready, you can trigger the bootstrap by calling the Deploy-Accelerator cmdlet with a few parameters. Behind the scenes, it calls Terraform to provision a collection of resources both in Azure and in your chosen VCS.

To give you a concrete example, when choosing Bicep with Azure DevOps and the public networking scenario, it creates: two user assigned managed identities with federated credentials, a project, a repository with environments, a group, pipelines for CI and CD, service connections with workload identity federation, and a variable group. There could be some additional components based on the choices you make and declare in the bootstrap configuration YAML file. There are several sample config files available to pick and modify, like this one for Azure DevOps and Bicep.

Note: This phase uses IaC modules stored in the Azure/accelerator-bootstrap-modules repository on GitHub. You can see all the code if you are curious about how it was designed and written.

ℹ The Bootstrap is meant to be executed once; when completed, you would leverage the CD pipeline (workflow) in your chosen VCS to deploy the platform LZs and maintain their lifecycle there.

When you check your terminal output and see that the bootstrap went well, it is time to head to your new repository. That’s where you Run the continuous delivery pipeline for the first time and use Starter Modules to deploy a specific platform landing zone configuration.
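The tooling part of the pre-requisites step can be illustrated with a few lines of Python. This is only a sketch, assuming the tools are exposed on PATH under the executable names `pwsh`, `az`, and `git`; the `missing_tools` helper is a hypothetical name of mine, and the Test-AcceleratorRequirements cmdlet from the ALZ module remains the proper check.

```python
import shutil
from typing import Callable, Iterable, Optional

# Executable names for the tools listed in the pre-requisites step
# (PowerShell 7, Azure CLI, Git). Assumption: they are on PATH under these names.
REQUIRED_TOOLS = ("pwsh", "az", "git")


def missing_tools(
    required: Iterable[str] = REQUIRED_TOOLS,
    which: Callable[[str], Optional[str]] = shutil.which,
) -> list[str]:
    """Return the required executables that cannot be found on PATH."""
    return [name for name in required if which(name) is None]


if __name__ == "__main__":
    missing = missing_tools()
    if missing:
        print("Missing tools: " + ", ".join(missing))
    else:
        print("All required tools were found on PATH.")
```

The lookup function is injectable so the check can be exercised without the tools being installed. It only confirms that the executables exist; it says nothing about versions, VCS permissions, or Azure role assignments.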

Customization

There are several ways to customize your ALZ environment and its configuration:

- Bootstrap configuration file (e.g., input.yaml) - mainly used to provide config choices for provisioning VCS components and Azure resources that support the CI/CD workflow. Some of this configuration is reused in the Starter module (and copied over to the config/parameters.json file).
- Names for bootstrap resources in Azure use the service name and environment name parameter values. If you want to use an alternative naming convention for resources, it can be overridden by following this guidance.
- Two additional options are available, but only for Terraform:
  - Platform Landing Zone Configuration File (for TF ALZ Starter module)
  - Platform Landing Zone Library (lib) Folder (for Platform Landing Zone Starter module)
- You could fork and modify the modules directly as described in this Consumer Guide. This will, however, be out of sync with the CD pipeline created by the Accelerator since it always pulls the entire modules package from its upstream (Azure/ALZ-Bicep).

There might be additional areas for changing the configuration stored in the repository once you pass the Bootstrap phase (before you run the CD pipeline for the first time), but I couldn’t find any reference to it in the documentation:

- Modify the /config/parameters.json file and add module variables to further customize the environment.
- Modify the parameter files (in JSON format) in the config/custom-parameters folder. By inspecting the workflow definition files it does seem they are used for every CD run, but the documentation doesn’t mention it (or at least I couldn’t find it).

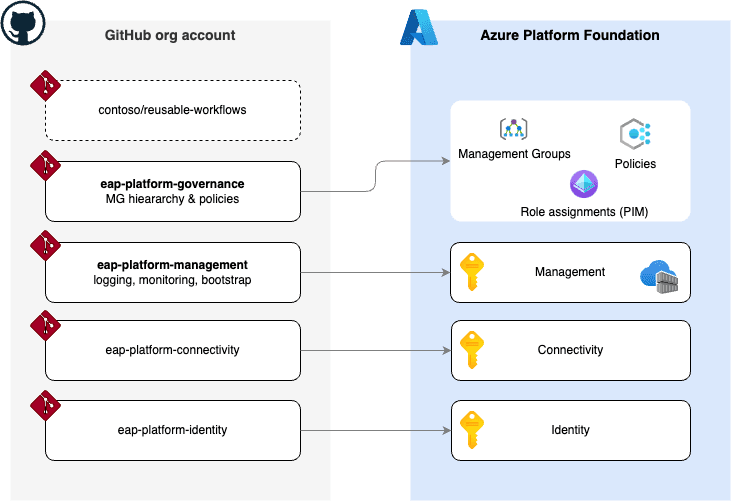

Code repositories in the ALZ universe

I always like to build a mental map of different ‘building blocks’ / code sources and how they relate to one another. The diagram I made focuses on the Bicep path of the Accelerator (with some components being common for the Terraform path):

- Your journey with the ALZ Accelerator should begin with the new documentation page - Azure Landing Zones Documentation. It is available using the handy aka.ms/alz/accelerator shortcut and it is sourced from the Azure/Azure-Landing-Zones repository.
- You will download and install the ALZ module from the PowerShell Gallery. The source for the code is in the Azure/ALZ-PowerShell-Module repository.
- During the Bootstrap phase, the automation leverages the Bootstrap modules hosted in the Azure/accelerator-bootstrap-modules repository. It uses Terraform due to its ability to provision resources in Azure, Azure DevOps, and GitHub, but it doesn’t limit your options for selecting Bicep as your IaC language for the Run phase.
- When you trigger the continuous delivery pipeline (workflow) in your VCS repository or project, as part of the flow it downloads the latest release (zip) of the ALZ-Bicep modules package (for Terraform, it is a different source repository). That one is maintained in the Azure/ALZ-Bicep repo.
- When you inspect that repository and its code, you will see that all Bicep modules (except one: ptn/network/private-link-private-dns-zones) are stored locally.
- Being able to use and reference these modules directly from the Microsoft Public Bicep Registry through the Azure Verified Modules initiative is on the roadmap, which is why there isn’t a “link” between the ALZ-Bicep repo and the AVM repository for Bicep - Azure/bicep-registry-modules - in my diagram. You can even spot traces of AVM’s predecessor CARML (in the CRML folder).
- I understood from one ALZ external community call that when it comes to custom policy definitions for ALZ, the upstream repository is still the original Azure/Enterprise-Scale and there is a workflow making sure the downstream ALZ-Bicep is updated on a regular basis. This might not be entirely correct!
- As I mentioned above, the Bicep module for Subscription Vending - Azure/bicep-lz-vending - is not directly integrated with the Accelerator.
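The "download the latest release" step can be sketched with GitHub's public REST API, which exposes a `releases/latest` endpoint per repository and returns a JSON payload containing a `zipball_url` field. This is a minimal Python illustration under my own assumptions: the helper names are hypothetical, and I have not confirmed which archive (source zipball or a release asset) the Accelerator's pipeline actually downloads.

```python
from typing import Any, Mapping

GITHUB_API = "https://api.github.com"


def latest_release_endpoint(repo: str) -> str:
    """Build the GitHub REST API URL for the latest release of 'owner/name'."""
    owner, _, name = repo.partition("/")
    if not owner or not name or "/" in name:
        raise ValueError(f"expected 'owner/name', got {repo!r}")
    return f"{GITHUB_API}/repos/{owner}/{name}/releases/latest"


def source_zip_url(release: Mapping[str, Any]) -> str:
    """Return the source archive URL from a release payload.

    'zipball_url' is a standard field of the GitHub release object.
    """
    return release["zipball_url"]


if __name__ == "__main__":
    # Only prints the endpoint; fetching it is left to the HTTP client of your choice.
    print(latest_release_endpoint("Azure/ALZ-Bicep"))
```

Because the pipeline resolves "latest" on every run, a fork of the modules is never picked up automatically, which is the out-of-sync issue mentioned in the Customization section.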

New vs. Classic Accelerator

If you tried earlier versions of the ALZ Bicep Accelerator, you might have seen this disclaimer in the ALZ Bicep Wiki:

The Classic Accelerator used a collection of workflows and respective PowerShell scripts to manage parts of the Platform Landing Zone.

The new ALZ Accelerator uses only two pipelines (workflows): CI and CD. It also leverages reusable workflows (or pipeline templates).

Also, all parts of the platform are treated as steps and the provisioning is sequenced inside one job, as shown in the screenshot below from one of my test deployments with Azure DevOps and Bicep combo:

My implementation

I planned to deploy the ALZ reference architecture in our internal tenant (in a Brownfield fashion) and at the same time assess the new Accelerator and its new features. It seemed to be a great fit, especially since I wanted to use IaC and the GitOps style of managing the landing zones.

For the Bootstrap phase, I selected:

- Bicep as iac_type
- alz-github as bootstrap_module_name
- Complete as starter_module_name
- Tenant Root Group as the Parent MG
- I went with the ‘Three subscriptions model’ and deployed the bootstrap resources in the Management subscription

I didn’t see compelling reasons for using private networking and self-hosted runners. This would have been different if I had chosen Terraform as IaC, even though there are two ways of keeping the state file in a secured blob storage - Large Runners or Azure VNet integration.

What didn’t work for us

While I was studying the composition of this solution and its customization options, I quickly realized that I would need to deviate from my desired “layout” and that several of my key requirements are not compatible with the new Accelerator and its design:

Store the configuration of each platform landing zone in a separate code repository:

I want to delegate access to different platform LZs to separate teams. The Accelerator uses the ‘monorepo’ approach for storing configuration, so delegating the responsibility for parts of the configuration to different teams would be cumbersome, especially since it uses one primary configuration file (config/parameters.json).

Such distribution to several repos and respective workflows could simplify and speed up redeployments and configuration changes.

Be true to the code-first infrastructure provisioning principle and treat the code repository as a single source of truth:

- The Accelerator doesn’t honor this principle, since it provisions the bootstrap resources (managed identities, ACR, etc.) in ‘fire and forget’ fashion. I know this is a classic ‘chicken and egg’ problem, but it would be great to be able to ‘import’ those resources to the Management LZ repo.

I prefer having more direct access to the IaC deployment files and their parameters over such a high level of abstraction:

- I wanted to be able to add additional components to platform LZs that are either not part of the reference implementation (e.g., replica domain controllers, Azure VNet Manager) or aren’t implemented in a flexible enough way (e.g., Azure Firewall policies).

Path we are (probably) taking

At the time of writing this blog post, we haven’t completely decided which implementation approach to choose, but considering the requirements I described above, we will most likely:

avoid using the ALZ Accelerator due to the abovementioned reasons

create four GitHub repositories (not counting the existing repo for reusable workflows we already have in the organization) and map them to three Platform subscriptions:

fork the ALZ Bicep modules from the Azure/ALZ-Bicep repo to a Private Modules Library and publish them in our Private Bicep Registry.

💡 I wrote an article about building such a library, you can find it here.

follow the Module Deployment Sequencing guidance but split it in a way to match it with the repos-subs mapping

Obviously, this requires a lot of additional engineering work, but this approach could give us the needed level of flexibility and align with the overall Azure Landing Zone Journey. Once the Project Uplift is complete and ALZ-Bicep modules are converted to AVM modules, we could easily switch the reference to br/public in our deployment templates.
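To make the "switch the reference" idea concrete, here is a small Python sketch of the two Bicep module reference formats involved: a private Azure Container Registry reference (`br:<registry>.azurecr.io/<path>:<tag>`) and the built-in public registry alias (`br/public:<path>:<tag>`). The registry name `contosomodules`, the private module path, and the tags are placeholders of mine; which paths and versions the converted ALZ modules will use is not known at this point.

```python
def private_module_ref(registry: str, path: str, tag: str) -> str:
    """Reference a module published to a private Azure Container Registry."""
    return f"br:{registry}.azurecr.io/{path}:{tag}"


def public_module_ref(path: str, tag: str) -> str:
    """Reference a module through Bicep's built-in 'public' registry alias."""
    return f"br/public:{path}:{tag}"


if __name__ == "__main__":
    # Hypothetical private library entry (registry name, path, and tag are placeholders).
    print(private_module_ref("contosomodules", "bicep/modules/hub-networking", "1.0.0"))
    # The one AVM pattern module ALZ-Bicep already consumes; the tag is a placeholder.
    print(public_module_ref("avm/ptn/network/private-link-private-dns-zones", "0.0.0"))
```

If the deployment templates keep the registry prefix in one place, the later switch comes down to changing that prefix and the module path. Parameter names may still differ between our forked modules and their AVM successors, so the change is unlikely to be purely mechanical.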

Conclusion

The new ALZ Accelerator is a very robust and interesting attempt to automate the deployment and lifecycle management of platform landing zones beyond what was possible previously with the original accelerators (the Portal experience, JSON ARM, Terraform, and Bicep).

The level of abstraction and the way it pulls the entire package of ALZ modules might not be a good fit for every customer, but it has clear benefits over a more complex DIY approach.

My wish is that it eventually switches to a model that leverages AVMs for both Terraform and Bicep. The good news is that this is already on the roadmap and there is an open issue in the Azure/ALZ-Bicep repo: